Is Shift-Left Dead, or Just Getting Smarter with AI?

By Ali Naqvi

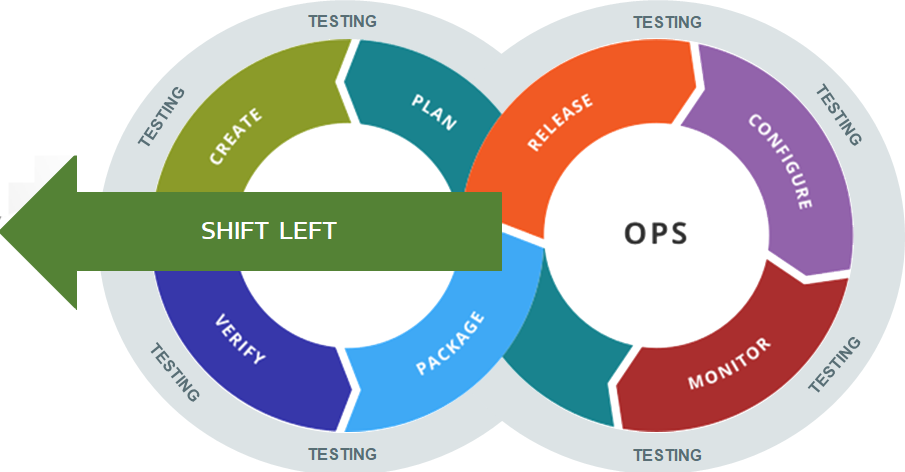

A debate has recently started about whether Shift-Left is still relevant. Shift-Left means integrating security into the software development lifecycle (SDLC) so that vulnerabilities are found and fixed early, before they travel further down the pipeline, where remediation costs and risks are higher. However, with the rapid advancement of AI, some argue that AI can replace traditional Shift-Left practices. So the question is: is Shift-Left security still needed, or will AI make it obsolete?

The primary argument is that advanced AI systems can identify and fix vulnerabilities faster and more accurately than before, which makes shifting left less critical. A recent example is Google’s AI agent, Big Sleep, which uncovered an exploitable stack buffer underflow in SQLite, a widely used open-source database engine. Google described this as the first public example of an AI agent discovering a previously unknown, exploitable memory-safety flaw in widely used real-world software. In effect, Big Sleep replicated a security researcher's workflow, making security evaluations more efficient and effective. The benefit is faster patching, which shrinks the window of exposure to potential threats. Some experts believe this points to a future in which AI handles security threats with minimal input from security engineers or developers.

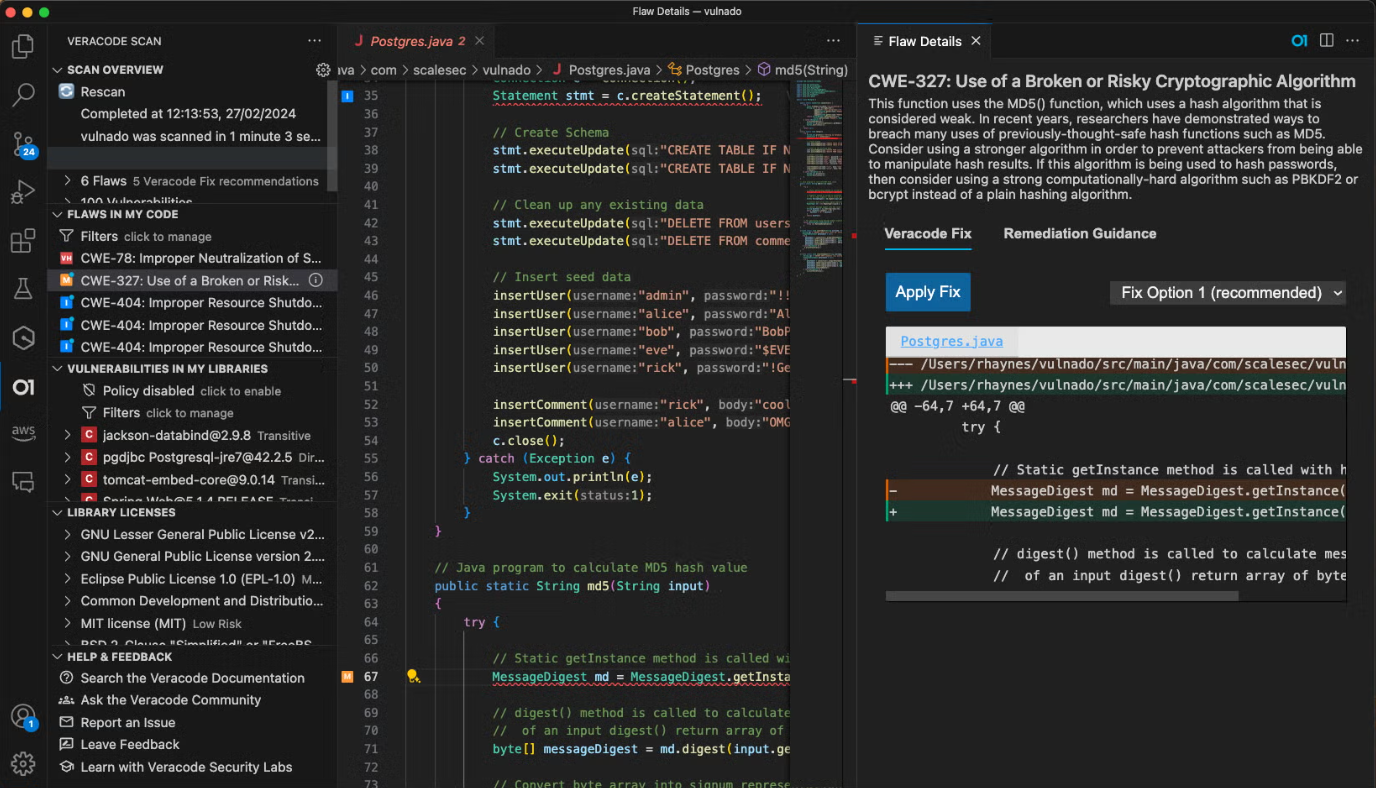

The counterargument, and the position we should take, is to bring AI into Shift-Left rather than replace it. By integrating AI-powered tools into the development process, developers receive real-time feedback on vulnerabilities and can fix issues more quickly and effectively. This takes much of the security burden off developers so they can focus on core development. AppSec companies such as Veracode and Snyk are implementing this in various ways, for example by helping developers write secure code in the IDE, copilot-style, in line with the Shift-Left philosophy. They also scan for vulnerabilities and let teams add policies that break builds. Used this way, AI helps secure the earliest stages of the development cycle.
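To make the idea of a build-breaking policy concrete, here is a minimal sketch of a gate that a CI step could run over scanner results. It is an illustration only, not how Veracode or Snyk implement their policies: the `Finding` shape, the severity scale, and the `fail_on` threshold are all assumptions made for this example.

```python
from dataclasses import dataclass

# Severity ranking assumed for this sketch; real scanners define their own scales.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Finding:
    """Hypothetical, simplified shape of one scanner result."""
    rule_id: str
    severity: str
    file: str


def violations(findings, fail_on="high"):
    """Return the findings at or above the policy threshold."""
    threshold = SEVERITY_RANK[fail_on]
    return [f for f in findings if SEVERITY_RANK[f.severity] >= threshold]


def gate(findings, fail_on="high"):
    """Return a process exit code: 0 lets the build pass, 1 breaks it."""
    blocked = violations(findings, fail_on)
    for f in blocked:
        print(f"BLOCKED {f.severity.upper()} {f.rule_id} in {f.file}")
    return 1 if blocked else 0
```

In a pipeline, the return value of `gate(...)` would be passed to `sys.exit`, so a non-zero code stops the build before vulnerable code moves further along.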

The debate becomes more relevant given how widely modern software development relies on open-source libraries and third-party packages. These components speed up development and add functionality, but they also introduce significant security risks: they come from outside sources and may contain vulnerabilities. Because developers have limited control over these components, security needs to be applied early in the development process. AI in a Shift-Left approach can provide automated monitoring and vulnerability detection for these external packages, maintaining security without adding extra burden for developers.
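As a rough illustration of automated dependency checking, the sketch below compares pinned package versions against a list of advisories. Everything in it is assumed for the example: the package names, the advisory ID, and the simple dotted-number version format. A real tool would pull advisories from a maintained vulnerability database and handle richer version schemes.

```python
def parse_version(version):
    """Turn a simple dotted version such as '2.4.1' into a comparable tuple."""
    return tuple(int(part) for part in version.split("."))


# Hypothetical advisory data: package name -> (first fixed version, advisory ID).
ADVISORIES = {"examplelib": ("2.4.1", "EXAMPLE-0001")}


def vulnerable_dependencies(pinned, advisories=ADVISORIES):
    """Return (name, version, fixed_in, advisory_id) for each affected package."""
    hits = []
    for name, version in sorted(pinned.items()):
        if name in advisories:
            fixed_in, advisory_id = advisories[name]
            # A pinned version older than the first fixed release is affected.
            if parse_version(version) < parse_version(fixed_in):
                hits.append((name, version, fixed_in, advisory_id))
    return hits
```

Run on every commit or on a schedule, a check like this flags risky third-party packages early without asking developers to track advisories by hand.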

Conclusion:

AI will improve application security, but the principles of Shift-Left remain the same. The idea that AI can replace early-stage security is still in its infancy and has a long way to go before it can stand alone. Integrating AI into Shift-Left is therefore the more practical and effective approach today: it addresses the changing security landscape and lets developers secure their applications quickly. In short, Shift-Left is here to stay; it just needs a bit of AI love.